AI 3D Holdout Mattes for Pro VFX Compositing: Save Time

Revolutionizing occlusion and depth integration in professional pipelines with Tripo AI

Document Information

| Version | Action | Owner |
|---|---|---|
| 1.0 | Document created | 王磊 |

Manual rotoscoping for holdout mattes traditionally drains visual effects budgets and extends critical delivery deadlines. Complex spatial interactions and intersecting scene elements require precise occlusion that standard two-dimensional splines struggle to maintain during dynamic camera movements.

By integrating rapid AI 3D generation into their 2026 pipelines, compositors can quickly deploy geometrically accurate proxy meshes that block out foreground subjects. Complex computer-generated layers then integrate cleanly into live-action plates in professional film production.

Key Insights

- Parallax Accuracy: A 3D spatial matte is rendered through the tracked camera, so it produces correct parallax and depth occlusion during camera moves. Two-dimensional splines can only approximate this by hand.

- Fast Conversion: Modern generation algorithms let compositors quickly convert a still reference frame into a structural 3D model that is ready for pipeline integration.

- Efficiency Gains: Combining static 3D proxy geometry with point cloud tracking can replace hours of manual rotoscoping.

- Refined Integration: Generated geometry requires standard edge-feathering and motion blur matching to achieve feature-film quality sub-pixel integration.

The Evolution of Holdout Mattes in VFX Compositing

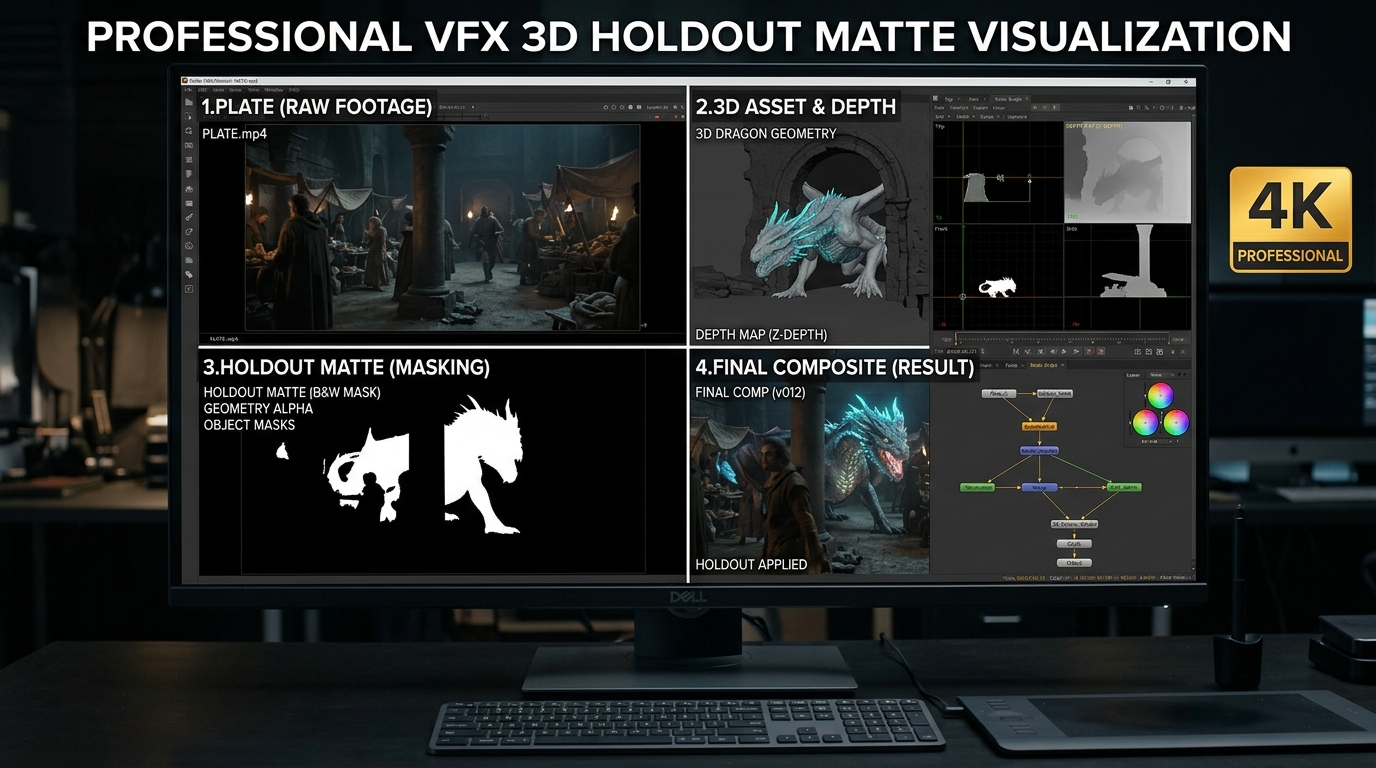

Holdout mattes are essential masking tools used to block out foreground elements, allowing background computer-generated layers to sit correctly in three-dimensional space. While traditionally reliant on time-consuming manual rotoscoping, 2026 workflows utilize rapid AI 3D generation to provide an instant, geometrically accurate alternative for professional compositors.
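The core of a depth-aware holdout can be shown per pixel. The sketch below makes a few simplifying assumptions. The proxy mesh and the CG layer have each been rendered to a camera-space depth buffer, and a hard depth test decides visibility. Anti-aliased edges are ignored, and no sampling is deep. `holdout_alpha` is a hypothetical helper for illustration. It is not a Nuke, Blender, or Tripo AI function.

```python
from typing import List, Sequence

Matte = List[List[float]]


def holdout_alpha(cg_alpha: Sequence[Sequence[float]],
                  cg_depth: Sequence[Sequence[float]],
                  proxy_depth: Sequence[Sequence[float]]) -> Matte:
    """Zero the CG alpha wherever the proxy mesh is nearer to camera than the CG.

    Depths are camera-space distances (smaller means closer). Pixels the proxy
    does not cover should hold float("inf") in proxy_depth.
    """
    result: Matte = []
    for a_row, c_row, p_row in zip(cg_alpha, cg_depth, proxy_depth):
        # Keep CG coverage only where the CG surface sits in front of the proxy.
        result.append([a if c < p else 0.0 for a, c, p in zip(a_row, c_row, p_row)])
    return result


if __name__ == "__main__":
    inf = float("inf")
    # One scanline: the proxy covers pixels 1 and 2; the CG sits at depth 10 everywhere.
    print(holdout_alpha([[1.0, 1.0, 1.0]],
                        [[10.0, 10.0, 10.0]],
                        [[inf, 5.0, 12.0]]))  # [[1.0, 0.0, 1.0]]
```

Pixel 2 stays visible because the CG element is in front of the proxy there. A flat matte cannot express that per-pixel depth decision unless someone redraws it by hand.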

Traditional Rotoscoping vs. 3D Spatial Mattes

Historically, creating a holdout matte required an artist to manually draw and animate vector splines around a foreground subject frame by frame. The industry shift toward converting 2D images to 3D models has fundamentally altered how studios handle complex occlusion. Instead of approximating volume with flat shapes, compositors now drop a physical geometric representation of the subject directly into the scene.
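Geometry fixes the parallax problem because of how a pinhole camera projects points. When the camera moves, each point on the subject shifts on screen by an amount that depends on its depth. With 2D splines, the artist has to reproduce that shift by hand. With geometry, it comes for free from projection.

The minimal sketch below assumes an unrotated pinhole camera that translates along world X. `PinholeCamera` and `screen_shift` are illustrative names, not part of any VFX package.

```python
from dataclasses import dataclass
from typing import Tuple

Point = Tuple[float, float, float]


@dataclass
class PinholeCamera:
    focal_px: float  # focal length expressed in pixels
    x: float = 0.0   # camera position along world X; looks down +Z, no rotation

    def project(self, p: Point) -> Tuple[float, float]:
        """Pixel offset of world point p from the image centre."""
        px, py, pz = p
        if pz <= 0:
            raise ValueError("point must be in front of the camera")
        return (self.focal_px * (px - self.x) / pz, self.focal_px * py / pz)


def screen_shift(p: Point, start: PinholeCamera, end: PinholeCamera) -> float:
    """Horizontal on-screen movement of p as the camera moves from start to end."""
    return end.project(p)[0] - start.project(p)[0]


if __name__ == "__main__":
    start, end = PinholeCamera(1000.0, x=0.0), PinholeCamera(1000.0, x=0.5)
    print(screen_shift((0.0, 0.0, 2.0), start, end))   # -250.0 (near point moves a lot)
    print(screen_shift((0.0, 0.0, 20.0), start, end))  # -25.0 (far point barely moves)
```

A proxy mesh viewed through the solved camera reproduces this depth-dependent shift at every vertex automatically.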

Using Tripo AI to Generate 3D Geometry for Mattes

With Tripo AI, the compositor converts a 2D reference frame from the plate into a structural 3D model, then exports it in a format the downstream tools can read.

Extracting Reference Frames for AI Generation

To generate an accurate proxy mesh, the compositor first isolates a clean, high-resolution reference frame from the live-action plate, ideally one where the subject is sharp and unobstructed. Professional compositing demands accurate spatial volume, so the underlying generation process uses Algorithm 3.1 to interpret the two-dimensional pixel data and infer the subject's volumetric depth.
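Picking the "clean" frame can be partly automated. The minimal sketch below ranks candidate frames by the variance of their Laplacian, a common sharpness heuristic. This scoring method is an assumption of this example, not a Tripo AI requirement. The sketch also assumes the frames are already decoded to grayscale float arrays. `laplacian_variance` and `pick_reference_frame` are hypothetical helpers.

```python
from typing import Sequence

Image = Sequence[Sequence[float]]  # grayscale, values in 0..1


def laplacian_variance(img: Image) -> float:
    """Variance of the 4-neighbour Laplacian over interior pixels; higher means sharper."""
    h, w = len(img), len(img[0])
    if h < 3 or w < 3:
        raise ValueError("image must be at least 3x3")
    values = []
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            values.append(img[y - 1][x] + img[y + 1][x] + img[y][x - 1]
                          + img[y][x + 1] - 4.0 * img[y][x])
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


def pick_reference_frame(frames: Sequence[Image]) -> int:
    """Index of the candidate frame with the highest sharpness score."""
    if not frames:
        raise ValueError("no candidate frames")
    return max(range(len(frames)), key=lambda i: laplacian_variance(frames[i]))


if __name__ == "__main__":
    soft = [[0.5] * 5 for _ in range(5)]                    # featureless, like a heavy blur
    sharp = [[0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]   # crisp vertical edge
    print(pick_reference_frame([soft, sharp]))  # 1
```

The score only flags motion-blurred or defocused frames. The compositor still has to judge occlusion and framing by eye.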

Recommended Export Formats for DCC Integration

Once the geometry is generated and verified, choosing the right export format is critical. Tripo AI can export the asset as USD, FBX, OBJ, STL, GLB, and 3MF. If a pipeline depends on specific software bridges, technical directors can use 3D format conversion tools to switch between formats such as GLB and OBJ.
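Any GLB-to-OBJ conversion ends with writing the mesh out as OBJ text. The minimal sketch below covers only that final step. It assumes the vertex positions and faces have already been read from the GLB by a dedicated library, since decoding binary glTF is out of scope here. It writes positions and faces only, because a holdout matte needs geometry rather than UVs or materials. `mesh_to_obj` and the object name `holdout_proxy` are hypothetical.

```python
from typing import Sequence, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, ...]  # zero-based vertex indices


def mesh_to_obj(vertices: Sequence[Vertex], faces: Sequence[Face],
                name: str = "holdout_proxy") -> str:
    """Serialize a polygon mesh as Wavefront OBJ text (positions and faces only)."""
    lines = [f"o {name}"]
    for x, y, z in vertices:
        lines.append(f"v {x:.6f} {y:.6f} {z:.6f}")
    for face in faces:
        if len(face) < 3:
            raise ValueError("faces need at least three vertices")
        if any(i < 0 or i >= len(vertices) for i in face):
            raise IndexError("face references a missing vertex")
        # OBJ vertex indices are 1-based, so shift each zero-based index.
        lines.append("f " + " ".join(str(i + 1) for i in face))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(mesh_to_obj([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)], [(0, 1, 2)]))
```

Keeping the output minimal makes the file easy to inspect when a conversion step misbehaves. Normals can be recomputed on import.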

Integrating AI 3D Models into Professional Nuke/Blender Workflows

Importing Tripo AI models into standard visual effects tools like Nuke or Blender requires accurate alignment between the proxy geometry and the established camera track.

Camera Tracking and Geometry Alignment

In Foundry's Nuke or in Blender, the compositor first tracks the live-action plate to solve a virtual camera. The generated proxy mesh is then imported and aligned to the solved scene by matching its position, rotation, and scale to features in the tracked point cloud. Implementing this method typically reduces total retopology and matte generation time from 6 hours to under 45 minutes.
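Part of the alignment step can be made numeric. The generator has no knowledge of the plate's scene scale, so the mesh must be scaled and positioned to match the tracked point cloud. The minimal sketch below makes two assumptions. Rotation has already been matched by hand, and the artist has picked a few corresponding landmarks on the mesh and in the point cloud. It then solves the least-squares uniform scale and translation in closed form. A full similarity fit that also recovers rotation (for example, Umeyama's method) would replace this in practice. All names are hypothetical.

```python
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def _centroid(pts: Sequence[Vec3]) -> Vec3:
    n = len(pts)
    return (sum(p[0] for p in pts) / n,
            sum(p[1] for p in pts) / n,
            sum(p[2] for p in pts) / n)


def fit_scale_translation(mesh_pts: Sequence[Vec3],
                          cloud_pts: Sequence[Vec3]) -> Tuple[float, Vec3]:
    """Least-squares uniform scale s and translation t so that s*p + t ~ q.

    Assumes rotation is already matched; pairs are artist-picked
    correspondences between mesh landmarks and tracked points.
    """
    if len(mesh_pts) != len(cloud_pts) or len(mesh_pts) < 2:
        raise ValueError("need at least two matching point pairs")
    pc, qc = _centroid(mesh_pts), _centroid(cloud_pts)
    num = den = 0.0
    for p, q in zip(mesh_pts, cloud_pts):
        dp = [p[k] - pc[k] for k in range(3)]
        dq = [q[k] - qc[k] for k in range(3)]
        num += sum(a * b for a, b in zip(dp, dq))  # cross term between the two point sets
        den += sum(a * a for a in dp)              # spread of the mesh landmarks
    if den == 0.0:
        raise ValueError("mesh landmarks must not all coincide")
    s = num / den
    t = (qc[0] - s * pc[0], qc[1] - s * pc[1], qc[2] - s * pc[2])
    return s, t


def rms_residual(s: float, t: Vec3, mesh_pts: Sequence[Vec3],
                 cloud_pts: Sequence[Vec3]) -> float:
    """Root-mean-square distance between transformed landmarks and tracked points."""
    total = 0.0
    for p, q in zip(mesh_pts, cloud_pts):
        total += sum((s * p[k] + t[k] - q[k]) ** 2 for k in range(3))
    return (total / len(mesh_pts)) ** 0.5


if __name__ == "__main__":
    mesh = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
    cloud = [(1.0, 2.0, 3.0), (3.0, 2.0, 3.0), (1.0, 4.0, 3.0)]  # 2x scale, offset (1, 2, 3)
    s, t = fit_scale_translation(mesh, cloud)
    print(round(s, 6), tuple(round(v, 6) for v in t))  # 2.0 (1.0, 2.0, 3.0)
```

A large RMS residual after the fit usually means the rotation was not matched well or a landmark pair is mislabeled.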

Advanced Techniques: Handling Motion and Complex Edges

Addressing edge cases such as moving subjects and extreme motion blur is crucial for feature-film quality.

For subjects with complex deformations, technical artists can use auto-rigging to generate a skeleton and bind the geometry to it. The proxy mesh can then be animated to match the moving subject precisely.
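Once a mesh is bound to a skeleton, rigs commonly deform it with linear blend skinning. Each vertex moves by a weighted average of its bones' transforms. The minimal sketch below assumes that standard scheme, which may differ from the one a particular auto-rigging tool uses. It also assumes each bone transform is already expressed relative to the bind pose, as a 3x4 matrix `[R | t]`. `transform_point` and `skin_vertex` are hypothetical names.

```python
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]
Transform = Sequence[Sequence[float]]  # 3x4 row-major [R | t], relative to bind pose


def transform_point(m: Transform, p: Vec3) -> Vec3:
    """Apply a 3x4 affine transform to a point."""
    return tuple(m[r][0] * p[0] + m[r][1] * p[1] + m[r][2] * p[2] + m[r][3]
                 for r in range(3))


def skin_vertex(rest: Vec3, bones: Sequence[Transform],
                weights: Sequence[float]) -> Vec3:
    """Linear blend skinning: weighted average of the vertex under each bone transform."""
    total = sum(weights)
    if total <= 0.0:
        raise ValueError("vertex needs positive skin weights")
    out = [0.0, 0.0, 0.0]
    for m, w in zip(bones, weights):
        moved = transform_point(m, rest)
        for k in range(3):
            out[k] += (w / total) * moved[k]  # weights normalized to sum to 1
    return (out[0], out[1], out[2])


if __name__ == "__main__":
    identity = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0]]
    shifted = [[1, 0, 0, 2], [0, 1, 0, 0], [0, 0, 1, 0]]  # bone translated +2 on X
    print(skin_vertex((1.0, 0.0, 0.0), [identity, shifted], [0.5, 0.5]))  # (2.0, 0.0, 0.0)
```

A vertex split evenly between a static bone and a moving one travels half as far as the moving bone, which is what keeps joints of the proxy from tearing.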

FAQ

1. How accurate is the edge detail of an AI 3D model holdout matte compared to manual roto?

A: Generated 3D models provide accurate spatial occlusion and rigid boundaries. To match the precision of manual roto at the sub-pixel level, compositors must process the resulting alpha channel through standard edge-feathering and motion blur nodes.

2. Can I use Tripo AI models for animated holdout mattes?

A: Yes. For rigid body motion, parent the static model to a 3D tracked null. For complex deformations, the mesh can be rigged using AI auto-rigging tools to match moving subjects frame by frame.

3. Which 3D format is recommended for importing Tripo AI mattes into Nuke?

A: USD and OBJ are the recommended formats. Recent Nuke releases build their new 3D system on USD, so USD files import natively, while OBJ remains a stable fallback for raw structural geometry.
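The first FAQ answer calls for edge-feathering and motion blur on the generated matte. The minimal sketch below approximates both on a small alpha matte stored as nested lists. The feather is a separable box blur. The motion blur averages copies of the matte shifted horizontally across the shutter, assuming linear horizontal motion of a whole number of pixels. These are simple stand-ins for a compositor's filter nodes, not their actual algorithms. `feather` and `motion_blur_x` are hypothetical names.

```python
from typing import List, Sequence

Matte = List[List[float]]


def _box_blur_1d(row: Sequence[float], radius: int) -> List[float]:
    """Average each sample with its neighbours within radius (window clipped at edges)."""
    n = len(row)
    out = []
    for i in range(n):
        lo, hi = max(0, i - radius), min(n, i + radius + 1)
        out.append(sum(row[lo:hi]) / (hi - lo))
    return out


def feather(matte: Sequence[Sequence[float]], radius: int) -> Matte:
    """Soften hard matte edges with a separable box blur: rows first, then columns."""
    if radius <= 0:
        return [list(r) for r in matte]
    rows = [_box_blur_1d(r, radius) for r in matte]
    cols = [_box_blur_1d(list(c), radius) for c in zip(*rows)]
    return [list(r) for r in zip(*cols)]


def motion_blur_x(matte: Sequence[Sequence[float]], shift_px: int, samples: int) -> Matte:
    """Average `samples` copies shifted from 0 to shift_px pixels along X (linear motion)."""
    if samples < 2:
        return [list(r) for r in matte]
    h, w = len(matte), len(matte[0])
    out = [[0.0] * w for _ in range(h)]
    for s in range(samples):
        dx = round(shift_px * s / (samples - 1))
        for y in range(h):
            for x in range(w):
                src = x - dx
                if 0 <= src < w:  # pixels shifted in from outside the frame stay empty
                    out[y][x] += matte[y][src] / samples
    return out


if __name__ == "__main__":
    print(feather([[0.0, 0.0, 1.0, 1.0]], 1))
    print(motion_blur_x([[1.0, 0.0, 0.0, 0.0]], 2, 3))
```

Separating the two passes lets the feather radius stay fixed while the blur length follows the per-shot motion.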